If you are a telecom, internet or cloud services company facing challenges with a growing volume of transactions, this blog is for you. Billing and call charging systems are usually well tested for a certain amount of load and data. When your volumes grow beyond that, the batch processes that generate invoices or charge call data records (CDRs) can become extremely slow. This can disrupt your billing cycles, delay invoices past their stipulated dates and times, and hurt your end users' experience.

It is said that some problems are “good to have”. As a company, you are certainly happy to see the increased business that comes with increased volumes. But you cannot ignore this problem for long, as it can quickly grow into a big issue: customers form a wrong impression of your service, CSRs spend more time resolving invoice issues and delays, and a backlog builds up in related operational tasks such as the accounting hand-off, revenue realisation and reporting to top management.

So how can your batch processes scale and process increased volumes of data faster? If you want to improve and scale the performance of batch processes such as invoicing or usage charging in your product or system, you would naturally start by looking for a technology expert with the relevant experience. It is not easy, however, to find a person with domain experience, or to have a team at your disposal that understands the domain well and can apply technology to resolve the domain problems. With batch processes becoming slower, the real challenge then becomes twofold:

- To find the right personnel with the relevant technology experience.

- To make sure that the technical team, while resolving the batch process performance issues, does not cause an adverse functional impact that leads to even more challenges.

Let’s say you have cleared that first hurdle and engaged the right team. The direction and solution they provide needs to be a long-term one that stands the test of time. What I mean is that if the solution involves writing custom programs into your batch processes, you will always need people who know that implementation in order to maintain and support those batch programs in future. Also, options such as a custom multi-threading solution to speed up a batch process can be very deceptive. They provide immediate gains in the short term but can create many more problems in the long run, such as inconsistent results in some use cases that are hard to reproduce, or erroneous results after a change of environment or a system upgrade.

As you discuss these issues with technical architects, they will most likely guide you towards frameworks that provide ready-made support for parallel processing. For a Java based technology stack, frameworks such as Spring Batch offer best in class options for scaling up your batch processes. The advantages of a framework such as Spring Batch are many. The key ones are that you are using a well tested framework that many organisations already use, that developers familiar with it are available, and that parallel processing is no longer your own custom logic, which helps you avoid problems that tend to creep into custom solutions, such as incorrect transaction or exception handling. The best part is that you do not code parallel processing into your system logic. You simply configure how to partition your data, how many servers to use for processing, how many threads to run on each server, and so on. This way you separate the infrastructure concerns from your application’s business logic. You delegate the responsibility for parallel processing to Spring Batch and let your application developers focus on the piece of code that Spring Batch will execute on each server to process the data.
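To make the separation of concerns concrete, here is a minimal sketch in plain Java. It is not Spring Batch code and not a substitute for the framework. The names `ParallelBatchSketch`, `ParallelConfig` and `run` are hypothetical, and the sketch assumes Java 16 or later. It only illustrates the idea that the business logic is a simple function, while the thread count and chunk size are settings supplied from outside.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.function.Function;

/** Illustrative only: keeps parallelism settings apart from business logic. */
final class ParallelBatchSketch {

    /** Infrastructure settings (hypothetical names), changed without touching business code. */
    record ParallelConfig(int threads, int chunkSize) {
        ParallelConfig {
            if (threads < 1 || chunkSize < 1) {
                throw new IllegalArgumentException("threads and chunkSize must be >= 1");
            }
        }
    }

    /** Applies businessLogic to every item, one chunk per task, preserving input order. */
    static <I, O> List<O> run(List<I> items, Function<I, O> businessLogic, ParallelConfig config) {
        ExecutorService pool = Executors.newFixedThreadPool(config.threads());
        try {
            List<Future<List<O>>> futures = new ArrayList<>();
            for (int from = 0; from < items.size(); from += config.chunkSize()) {
                int to = Math.min(from + config.chunkSize(), items.size());
                List<I> chunk = items.subList(from, to);
                futures.add(pool.submit(() -> {
                    List<O> out = new ArrayList<>(chunk.size());
                    for (I item : chunk) {
                        out.add(businessLogic.apply(item));
                    }
                    return out;
                }));
            }
            // Collect results in submission order so output order matches input order.
            List<O> results = new ArrayList<>(items.size());
            for (Future<List<O>> future : futures) {
                results.addAll(future.get());
            }
            return results;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for chunks", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("a chunk failed", e.getCause());
        } finally {
            pool.shutdown();
        }
    }
}
```

Calling `ParallelBatchSketch.run(invoices, rateInvoice, new ParallelBatchSketch.ParallelConfig(4, 100))`, where `invoices` and `rateInvoice` stand in for your own data and logic, would process the list on four threads in chunks of 100. A framework takes on the parts this sketch leaves out, such as transactions, restarts and distribution across servers.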

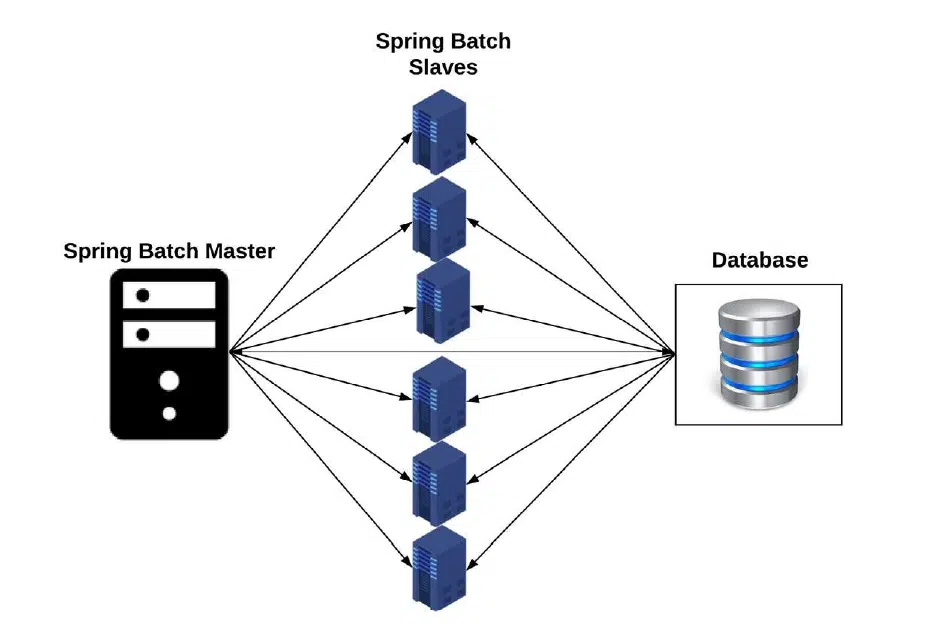

Multi-node parallel processing using Spring Batch:

The diagram depicts, in a simplified way, how the master-slave processing strategy works with Spring Batch. The master node is the controller: it partitions the data and passes one partition to each slave node. The slave nodes then do the real work of processing their share of the data.
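The partitioning step performed by the master can be sketched as follows. This is plain Java rather than the Spring Batch API, and it assumes the records to process are identified by a contiguous numeric ID range, which may not hold for your data. `IdRangePartitioner` and `IdRange` are hypothetical names, and the sketch assumes Java 16 or later.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative only: splits an inclusive ID range into contiguous partitions. */
final class IdRangePartitioner {

    /** One partition: an inclusive range of IDs handed to a single worker node. */
    record IdRange(long minId, long maxId) {}

    /**
     * Splits [minId, maxId] into at most gridSize ranges of near-equal size.
     * Fewer ranges are returned when there are fewer IDs than gridSize.
     */
    static List<IdRange> partition(long minId, long maxId, int gridSize) {
        if (gridSize < 1 || maxId < minId) {
            throw new IllegalArgumentException("need gridSize >= 1 and maxId >= minId");
        }
        long total = maxId - minId + 1;
        long base = total / gridSize;       // minimum number of IDs per partition
        long remainder = total % gridSize;  // the first `remainder` partitions get one extra ID
        List<IdRange> ranges = new ArrayList<>();
        long start = minId;
        for (int i = 0; i < gridSize && start <= maxId; i++) {
            long size = base + (i < remainder ? 1 : 0);
            ranges.add(new IdRange(start, start + size - 1));
            start += size;
        }
        return ranges;
    }
}
```

For example, `IdRangePartitioner.partition(1, 10, 3)` yields the ranges 1 to 4, 5 to 7 and 8 to 10. Each range would go to one slave node, which loads and processes only the records in its own range.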

Cloud based server deployments have also given the multi-node parallel processing paradigm a boost, as servers on clouds such as AWS are affordable. What’s more, you can bring up server instances on your cloud dynamically when there is more data to process. This dynamic scaling gives you value for money: you process faster by pressing more servers into action when you need them, and you release those servers once they have done their job. This strategy boosts the performance of your batch processes and also saves you the cost of running extra servers when they are not needed.
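As a rough illustration of the sizing decision behind dynamic scaling, the sketch below estimates how many worker servers a run needs. It assumes throughput scales linearly with the number of servers, which you would need to confirm by testing, and every name and figure in it is hypothetical.

```java
/** Illustrative only: estimates worker count under an assumed linear-scaling model. */
final class WorkerSizing {

    /**
     * @param records                 records to process in this run
     * @param recordsPerWorkerPerHour measured throughput of one worker (assumed constant)
     * @param windowHours             time available to finish the run
     * @param maxWorkers              upper limit on servers you are willing to start
     * @return workers to start, capped at maxWorkers; 0 when there is nothing to process
     */
    static int workersNeeded(long records, long recordsPerWorkerPerHour,
                             double windowHours, int maxWorkers) {
        if (records < 0 || recordsPerWorkerPerHour < 1 || windowHours <= 0 || maxWorkers < 1) {
            throw new IllegalArgumentException("invalid sizing input");
        }
        double capacityPerWorker = recordsPerWorkerPerHour * windowHours;
        long needed = (long) Math.ceil(records / capacityPerWorker);
        return (int) Math.min(needed, maxWorkers);
    }
}
```

With 10 million records, a worker that handles 1 million records per hour and a 4 hour window, `WorkerSizing.workersNeeded(10_000_000L, 1_000_000L, 4.0, 8)` returns 3. If the cap is reached, the run will not fit in the window, so the cap or the window has to change.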

It is imperative for organisations to make full use of parallel processing frameworks such as Spring Batch, and to combine them with cloud based platforms such as AWS, to meet the scaling requirements of the business. The best part of such a solution is its long-term nature. When you implement it, you test and certify it for the load that you may face 5 or even 10 years down the line. Once that is done, you can rest assured that growing volumes should not trouble you for years to come, provided you implement and test it with horizontal scaling in mind: adding more servers in parallel to your fleet should let you process more and more data.
